Did you know that having too much content on your website might actually be hurting you more than it is helping? At the start of each year, I like to do a full site review for every one of my clients, URL by URL, to make sure all content on their sites is pulling its own weight. I often think of content the way I think of employees at a company: every piece should be pulling its own weight and providing some sort of value. If it isn’t, it’s time to rethink its purpose or need. Should you remove it? Rewrite it? And how do you get the data you need to make the right decision?

Well, this post is about just that. I am going to walk you through my entire process of cutting content, from crawling all live URLs to pulling in all of the data.

What do you need to successfully do the review?

Before we begin, I want to ensure that you have the necessary access to tools and data. This will be imperative to be able to pull everything into one spot. Here is the tool stack I use to consolidate all the data into one location, by URL, so you can successfully handle a valuable content prune.

If you do not have a paid version of Screaming Frog, you can download it here. The paid version lets us connect to APIs that make pulling the data far easier, with less reliance on Google Sheets formulas like VLOOKUP and COUNTIF (we’ll still use those a little later on).

Why do you need the paid version?

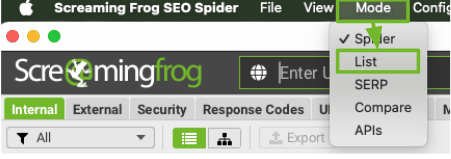

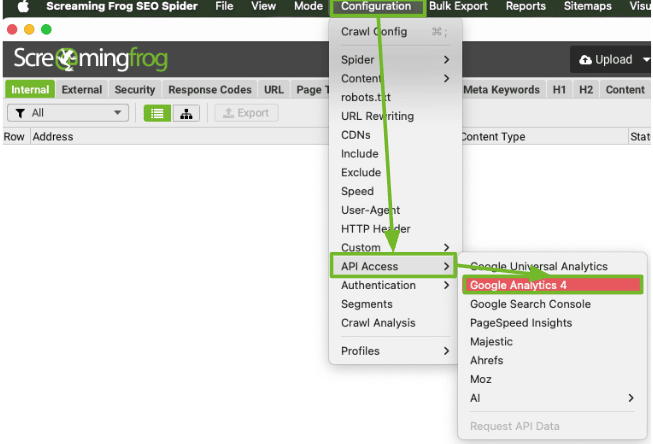

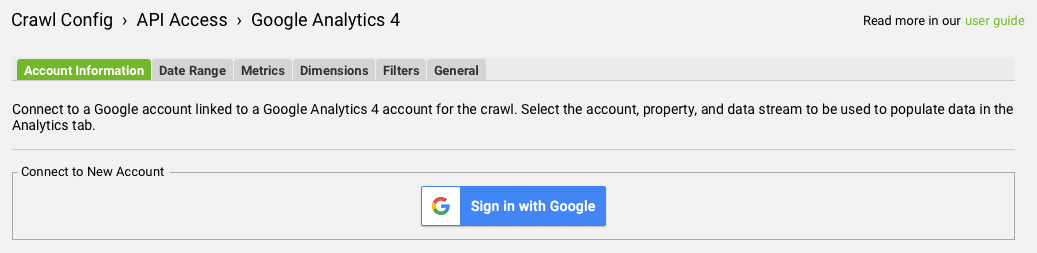

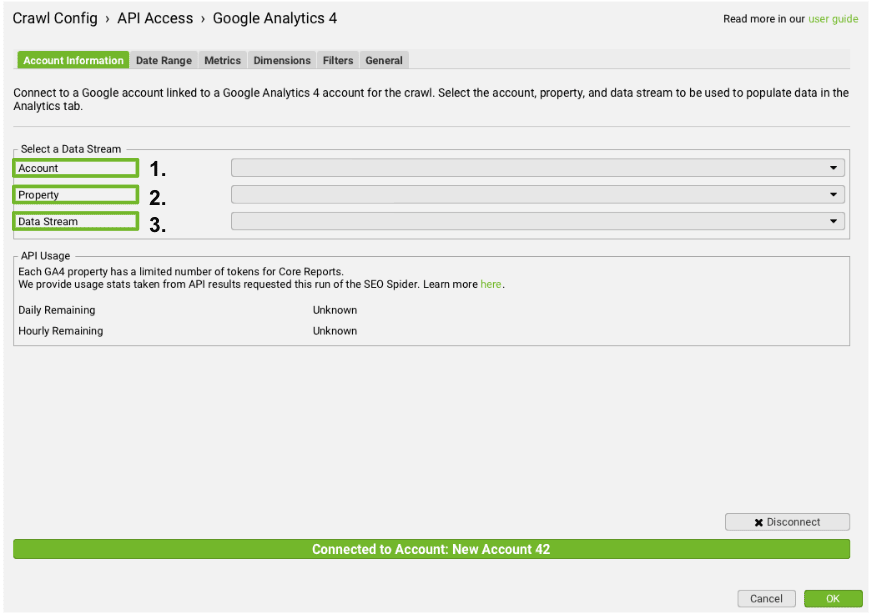

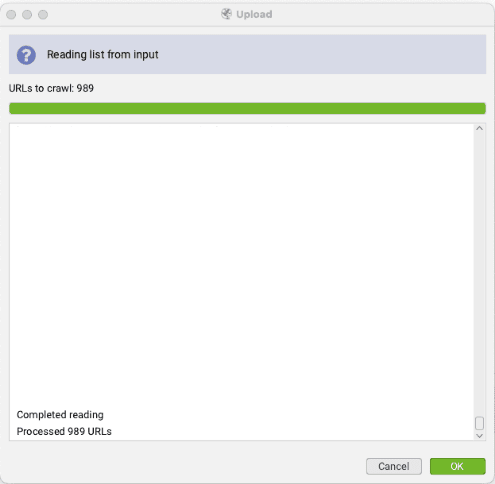

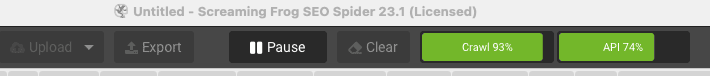

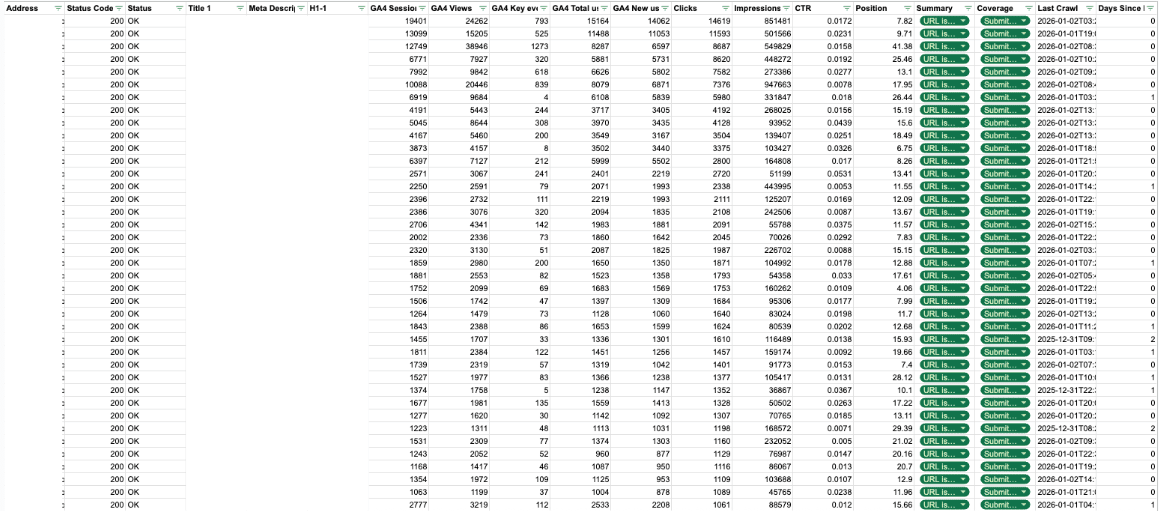

As mentioned, the paid version does three things for us. First, it lets us crawl every URL on the site (you might have more than 500). Second, it lets us change the crawl mode so that, instead of spidering the site, we can use the copy and paste features to crawl XML sitemaps. Lastly, it lets us connect to Google Analytics and Google Search Console through their APIs, which makes pulling the data in much easier.

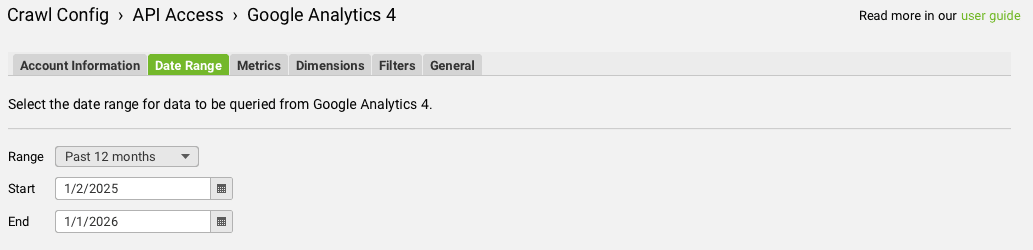

Of course, we want to look at the organic value of each of these URLs, so you will need access to the Google Analytics 4 property for the website. You can use third-party data from tools like Ahrefs or Semrush, but I prefer first-party data because it is more accurate, and it means I am making changes based on real data from the past 12 months.

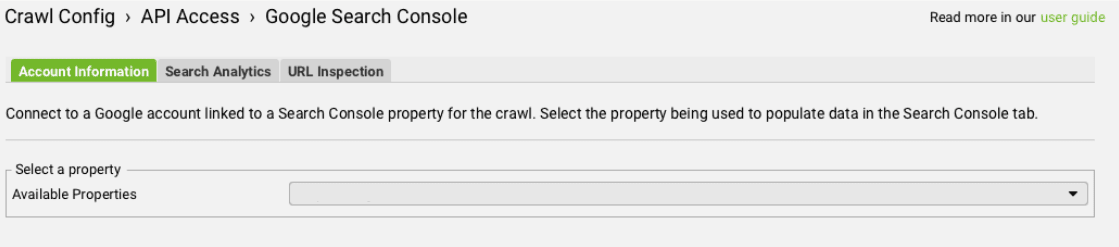

We also want to combine our GA4 data with Google Search Console data so that we get the full picture. Maybe you have a page that has gotten very few clicks and sessions, but it is getting a lot of impressions. A few tweaks to that page could get it ranking higher and earning more clicks. Having both data sets side by side makes it easier to spot pages like this and make strong, well-informed decisions.

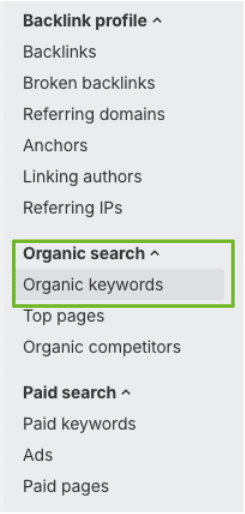

I personally like using Ahrefs, but you can use Semrush as well. What we will do with this information is pull all of the keyword rankings for a website, as well as the total number of backlinks, by URL. It’s important to combine this information with your full data set so that you can see how many total rankings, page 1 rankings, and individual backlinks each URL has.

Crawling With Screaming Frog (Paid)

So, let’s get to it. First, we are going to crawl the entire site with Screaming Frog, using the paid version. Here are the steps to do this quickly and efficiently.

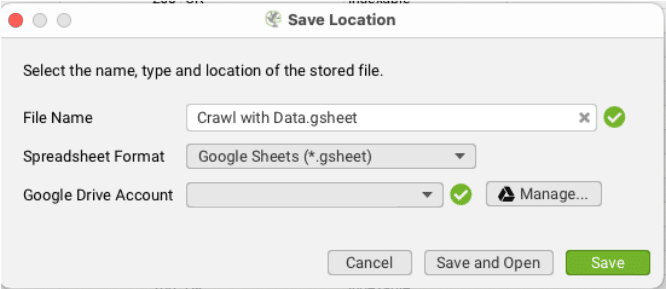

Exporting the data into a spreadsheet (Google Drive)

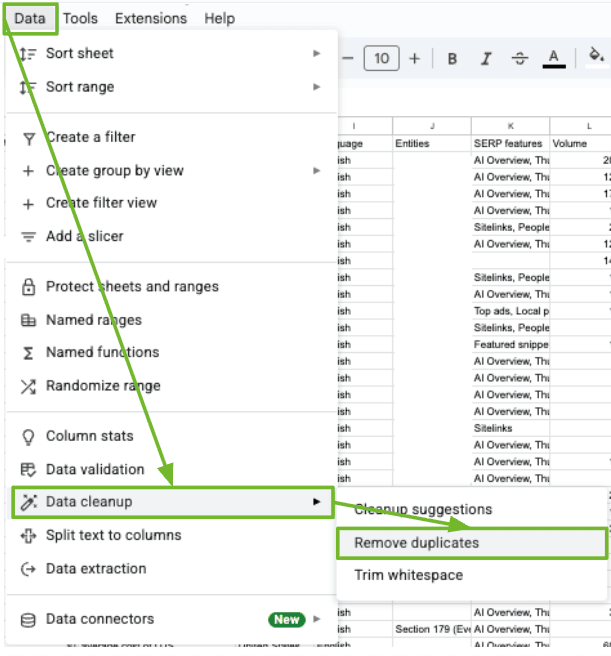

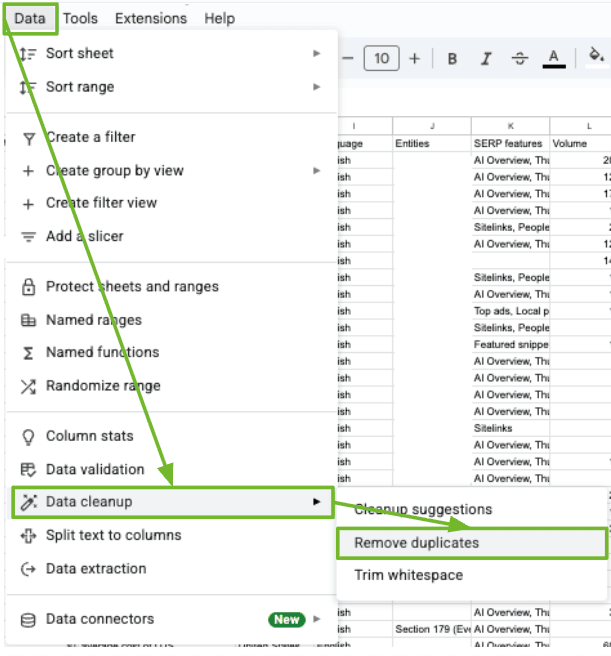

Once the crawl has finished running and the API has done its thing, it’s time to get this information into a place where you can actually analyze it and make decisions. I use Google Sheets / Google Drive so that the sheet can be easily shared with stakeholders and other people, and version history remains intact.

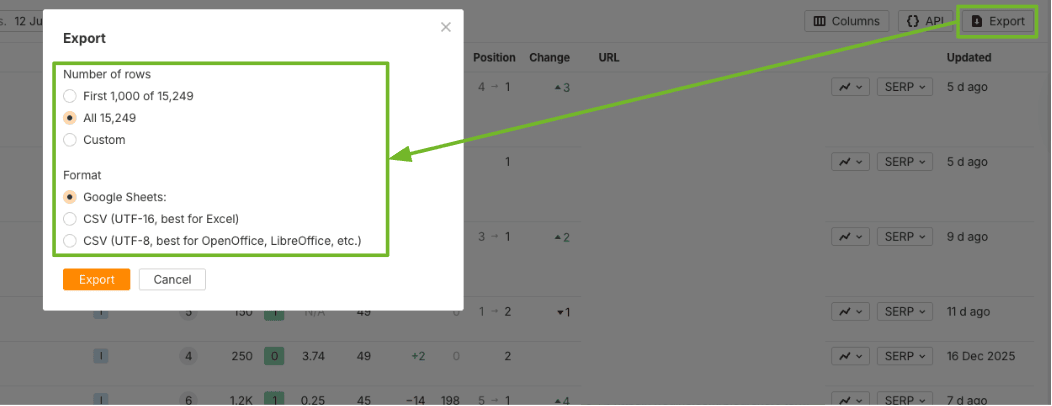

Now that we have the full crawl with data cleaned up and looking good, we are going to go and pull all of the keyword rankings and backlinks for the site, sort by URL, and match with our data set.

Adding keyword rankings (by URL)

While we have all the traffic information, it’s essential to also track the total number of rankings and page 1 rankings for each URL. This will help us paint a better picture overall. I use Ahrefs for this, but you can use your keyword ranking tool of choice.

For some of these formulas, here is a bit more information from Google about what they are and how to leverage them.
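If you would rather sanity-check the counts outside of Google Sheets, the COUNTIF-style logic can be sketched in a few lines of Python. This is only an illustration: the sample rows, the `count_rankings` function name, and the assumption that "page 1" means positions 1 through 10 are mine, not part of any tool's export format.

```python
from collections import Counter

# Hypothetical rows from a keyword export: (ranking URL, keyword, position).
keyword_rows = [
    ("https://example.com/a", "content pruning", 3),
    ("https://example.com/a", "prune content seo", 14),
    ("https://example.com/b", "crawl budget", 8),
    ("https://example.com/a", "content audit", 9),
]


def count_rankings(rows, page_one_cutoff=10):
    """Return (total rankings, page 1 rankings) per URL.

    page_one_cutoff=10 assumes page 1 means positions 1-10.
    """
    total = Counter()
    page_one = Counter()
    for url, _keyword, position in rows:
        total[url] += 1  # like COUNTIF on the URL column
        if position <= page_one_cutoff:
            page_one[url] += 1  # like COUNTIFS with a position condition
    return total, page_one


total, page_one = count_rankings(keyword_rows)
for url in sorted(total):
    print(url, total[url], page_one[url])
```

Each URL ends up with two numbers, total rankings and page 1 rankings, which are the same two columns you would add to the spreadsheet.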

Adding Backlinks (By URL)

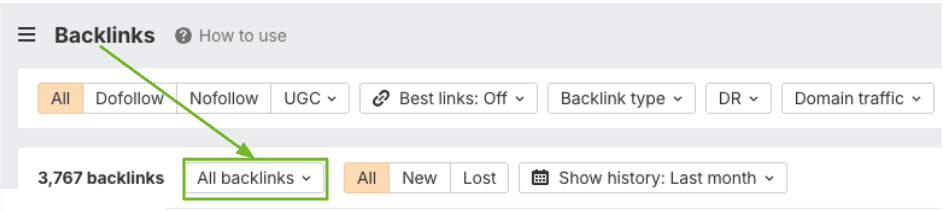

The last piece of this puzzle is pulling in the backlinks that each URL has. We are going to follow a process similar to the keyword rankings one above, but specific to links.
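As a rough illustration of matching backlinks to the crawl by URL, here is a small Python sketch of the lookup. The field names, sample data, and the trailing-slash normalization are assumptions for the example; your export will have its own columns, and you may not want to treat slash variants as the same page.

```python
from collections import Counter

# Hypothetical crawl rows (one per URL) and backlink targets (one per link).
crawl_rows = [
    {"url": "https://example.com/a", "sessions": 120},
    {"url": "https://example.com/b", "sessions": 0},
]
backlink_targets = [
    "https://example.com/a",
    "https://example.com/a/",  # trailing-slash variant of the same page
    "https://example.com/c",   # not in the crawl, so it matches nothing
]


def normalize(url):
    """Strip whitespace and a trailing slash (an assumed matching rule)."""
    return url.strip().rstrip("/")


def add_backlink_counts(rows, targets):
    """Return copies of rows with a 'backlinks' count; unmatched URLs get 0."""
    counts = Counter(normalize(target) for target in targets)
    return [
        {**row, "backlinks": counts.get(normalize(row["url"]), 0)}
        for row in rows
    ]


merged = add_backlink_counts(crawl_rows, backlink_targets)
for row in merged:
    print(row["url"], row["backlinks"])
```

Defaulting to 0 for URLs with no match keeps the column sortable, which a lookup that returns an error for missing URLs would not.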

And there you have it! You have now compiled a robust analysis of the organic performance of your website all in one spot. Now what?

Analyzing and Making Decisions

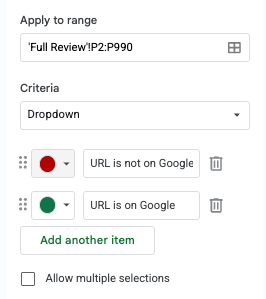

At this point, you have what most sites rarely do: a URL-level performance document that combines 12 months of organic traffic, visibility, all rankings, indexation, and authority signals in one place.

This is where content pruning either becomes a strategic advantage or a dangerous guessing game. The goal here is not to delete content aggressively and haphazardly. Our goal is to make defensible decisions for every URL based on real performance data. Identifying poor performers and content that is indexed and not doing anything is a great place to start for content pruning. If you need a hand making decisions, here’s how I go through my decision-making process with the data.

I bucket every URL into one of four outcomes: Keep As-Is, Rewrite / Improve, Consolidate / Redirect, or Prune.

In the Full Review tab, add a filter and sort the GA4 Sessions column from largest to smallest. This will immediately tell you which URLs are actually contributing organic value. For URLs with consistent sessions over the past 12 months, review:

If a page is generating traffic and conversions, it has earned its place on the site. These pages should almost never be deleted or rewritten aggressively.

At most, they may need:

First rule of SEO, always: if it’s working, don’t break it. Or, in other words, something I learned early on in my career: “First, do no harm!”

Next, sort by Impressions, largest to smallest, and compare against:

These URLs are often your biggest opportunities to improve or even rewrite. Common patterns you’ll see:

This usually indicates an intent mismatch or SERP competition issue, not a content quality failure. These pages are prime candidates for Rewrite / Improve, not pruning.

Typical fixes include:

If Google is showing the page, deleting it is often the wrong move.
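To make the "lots of impressions, few clicks" pattern concrete, here is a minimal Python sketch that computes click-through rate (clicks divided by impressions) and flags high-visibility pages with a low CTR. The thresholds of 1,000 impressions and a 1% CTR are placeholder assumptions, not recommendations, and the field names are hypothetical.

```python
# Hypothetical Search Console data, one row per URL.
gsc_rows = [
    {"url": "https://example.com/a", "clicks": 12, "impressions": 5000},
    {"url": "https://example.com/b", "clicks": 300, "impressions": 4000},
    {"url": "https://example.com/c", "clicks": 1, "impressions": 50},
]


def low_ctr_pages(rows, min_impressions=1000, max_ctr=0.01):
    """Return (url, ctr) for visible pages with a low click-through rate.

    Both thresholds are illustrative assumptions; tune them per site.
    """
    flagged = []
    # Sort by impressions, largest to smallest, as in the spreadsheet.
    for row in sorted(rows, key=lambda r: r["impressions"], reverse=True):
        if row["impressions"] < min_impressions:
            continue  # too little visibility to judge
        ctr = row["clicks"] / row["impressions"]
        if ctr <= max_ctr:
            flagged.append((row["url"], ctr))
    return flagged


for url, ctr in low_ctr_pages(gsc_rows):
    print(f"{url} CTR={ctr:.2%}")
```

Pages that come out of this filter are the ones Google is already showing, so they are worth a closer look before anyone considers removing them.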

Now bring in the keyword columns:

Pages with multiple rankings, even if traffic is low, are still contributing to topical relevance and long-tail coverage. A page with:

…is usually underperforming, not useless.

These URLs often act as:

They belong in the Rewrite / Improve bucket far more often than the prune bucket.

Before pruning anything, review the Backlinks column. If a URL has backlinks pointing to it, deleting it without a plan is risky.

Ask:

Pages with backlinks almost always fall into one of these buckets:

Pruning pages with backlinks outright should be rare and intentional.

Now check:

These columns help confirm whether Google:

Common red flags:

These are often strong prune candidates, assuming they also lack rankings and backlinks.

Once all signals are reviewed together, decisions become straightforward.

Keep As-Is

Minimal changes only.

Rewrite / Improve

These are usually your highest ROI pages.

Consolidate / Redirect

Merge into one strong URL and redirect the rest.

Prune

These pages are dead weight and should be removed. This is where the value starts to come in. Once you are able to identify dead weight, you can start to remove that content and give your more valuable content an even better fighting chance. This is all about crawl budget: keeping the bots on the pages that matter, are performing well, or could be performing better, not on pages that have done nothing for you in a year.
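The four buckets can be expressed as a simple rule cascade. The sketch below is one possible way to encode the order of checks described above; every threshold, the field names, and the choice to send low-value pages with backlinks to Consolidate / Redirect are my assumptions for illustration. It is a starting point for a first pass, not a substitute for reviewing each URL.

```python
def assign_bucket(row, keep_sessions=100, min_impressions=1000, min_rankings=3):
    """Assign one of the four outcomes to a URL row.

    All thresholds are illustrative assumptions; adjust them per site.
    """
    # Pages that are already working stay untouched.
    if row["sessions"] >= keep_sessions:
        return "Keep As-Is"
    # Visible or ranking pages with little traffic are underperforming.
    if row["impressions"] >= min_impressions or row["rankings"] >= min_rankings:
        return "Rewrite / Improve"
    # Pages with backlinks should not simply be deleted (assumed rule).
    if row["backlinks"] > 0:
        return "Consolidate / Redirect"
    # No traffic, no visibility, no rankings, no links.
    return "Prune"


# Hypothetical rows from the Full Review tab.
pages = [
    {"url": "/a", "sessions": 850, "impressions": 9000, "rankings": 40, "backlinks": 12},
    {"url": "/b", "sessions": 4, "impressions": 6200, "rankings": 9, "backlinks": 0},
    {"url": "/c", "sessions": 0, "impressions": 30, "rankings": 0, "backlinks": 3},
    {"url": "/d", "sessions": 0, "impressions": 0, "rankings": 0, "backlinks": 0},
]

labels = {page["url"]: assign_bucket(page) for page in pages}
for url, label in labels.items():
    print(url, label)
```

The order of the checks matters: traffic is tested first so that a working page is never pushed into another bucket, and Prune is only reached when every other signal is empty.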

Finally, add one more column to the Full Review tab:

Every URL gets a label: Keep As-Is, Rewrite / Improve, Consolidate / Redirect, or Prune.

This turns your spreadsheet into an execution plan, not just an audit.

It also gives you:

Template To Use

Thanks for hanging with me through this post. I know it’s a long one, but there are a lot of moving pieces and a lot of things to review when going through your content pruning plan and strategy. I have created the exact template that I use for all of my content pruning tasks and made it available below. No sense reinventing the wheel. We’re all grown-ups, so feel free to steal my template and adapt it to your own content pruning needs.

Good luck and have fun!

Since 2015, I have been specializing in all areas of SEO ranging from advanced technical SEO to local, enterprise, national, and international SEO campaigns. I’ve helped grow agencies through specialized training curriculums and learning management systems to achieve quality consistency. I have a passion for helping teach people the ins and outs of SEO strategy, tactics, execution, and more!